Back to Basics: Tracking Web Page Performance

Data Intelligence · Apr 12, 2018

As a User Interface (UI) Developer, my goal is to deliver an awesome digital experience, every time. I’m not just talking about code quality; that’s implied. I’m talking about ensuring greatness in every aspect of the experience that I’m building.

I bring the digital experience to life. I assemble all the pieces (the visual design, the written word, and the interactions) to create what end users will ultimately experience. I am the first user. The guinea pig. And the guardian of quality. Increasingly, I see my role as a UI developer as that of User Advocate. Would this web page get the job done for me? If not, back to the drawing board.

So, what does a quality user experience look like? This is the timeless, gazillion-dollar question, right?

At TELUS Digital, my place of employment and my passion, we have clearly defined metrics around quality. This starts with what we call “digital basics”: qualities universal to every digital experience we create. The good news is that measuring quality is pretty easy, and the results are meaningful. Hooray! Who doesn’t love easy?

I regularly remind my team that users don’t care how we do the things we do. When I browse a web page, I don’t ask, “What is the tech stack?” Never. Or, “Did the SEO team and content writers sync up to discuss this content?” Nope. “Was there any user testing?” Of course not. As an end user, that’s neither my job nor my intent. Rather, I expect to search, find the stuff I want, complete my action, and get back to my day, in short order.

Now, that isn’t meant to undervalue the importance of our collective work. Rather, it’s a reminder that, as designers and implementers of digital journeys, a job well done should result in an effortless experience that quickly answers the user’s intent.

Notice how I constantly refer to the “user”, as in “User Interface” and “User Experience”. As a starting point for defining quality, be the user, not only the technician. That will serve you well in defining your baseline performance metrics.

The cornerstones of web page quality

At TELUS Digital, every online experience we create is measured against 5 Basic Criteria, namely:

Content: that’s the reason you’re here, isn’t it?

Accessibility: every visitor deserves a quality experience

Page Speed: faster is better. Let me get on with my life.

Search Engine Optimization (SEO): If users can’t find us on search engines, we might as well go back to print. Maybe a little overstated, but you get the point. We want to be discoverable everywhere a user starts their journey. Search engines represent the starting point for most.

Analytics: How else do we know how well we’re doing? Try something. Measure the results. Rinse. Repeat.

Of course, like any mature digital team, we have many additional Key Performance Indicators (KPIs) to evaluate our user experiences, such as bounce rate and conversions, but these are focused on specific user journeys. Our basic performance indicators (BPIs; now I’m just making stuff up) are universal to every digital experience we create. Or, more to the point: if we don’t satisfy these, our page-specific KPIs are guaranteed to underperform.

How do we measure the basics?

Before I delve into our secret sauce, let me give you some context. TELUS Digital is a team of ~300 digital pros encompassing many disciplines. Our mandate is to establish digital best practices and to create earth-shattering user experiences to support TELUS, one of Canada’s largest telecommunications companies. Our work directly impacts millions of users every day. That’s a big responsibility. My anxiety is rising just thinking about it.

About a year and a half ago, we embarked on a mission to re-platform and consolidate our myriad tech stacks. Like most large organizations, we have a legacy of acquisitions and trials-and-errors [and successes], coupled with external forces such as changing technologies and user expectations. Ultimately, we reached a tipping point. We decided the best way to serve our users was to tear the building down and start fresh. No small feat.

Turns out, the technical challenges have been the easy part. This has been an exercise in change management: building a jet plane while flying it. There are lots of moving parts, and keeping an eye on the prize isn’t always easy.

If you’re wondering, the TELUS Digital Platform is based on Node.js, modular ReactJS (universal), Docker, OpenShift, continuous integration, [increasingly] continuous deployments, and Adobe products for our analytics. Of course, there is a multitude of libraries and tools in-between, but you get the idea. We’re doing cool stuff on a grand scale, and iterating at lightning speed. Most start-ups would be envious of the speed at which we move, and we support a 30+ billion-dollar enterprise.

Now for the simple, yet profound question: has all this re-platform stuff actually created value for our customers? That’s the goal, right? Making digital experiences better for our users? Specifically, in all the awesomeness we think we’ve achieved, did we actually improve on the basics: page speed, accessibility, content, SEO, and analytics? How can we be certain?

Step into the Light[house]

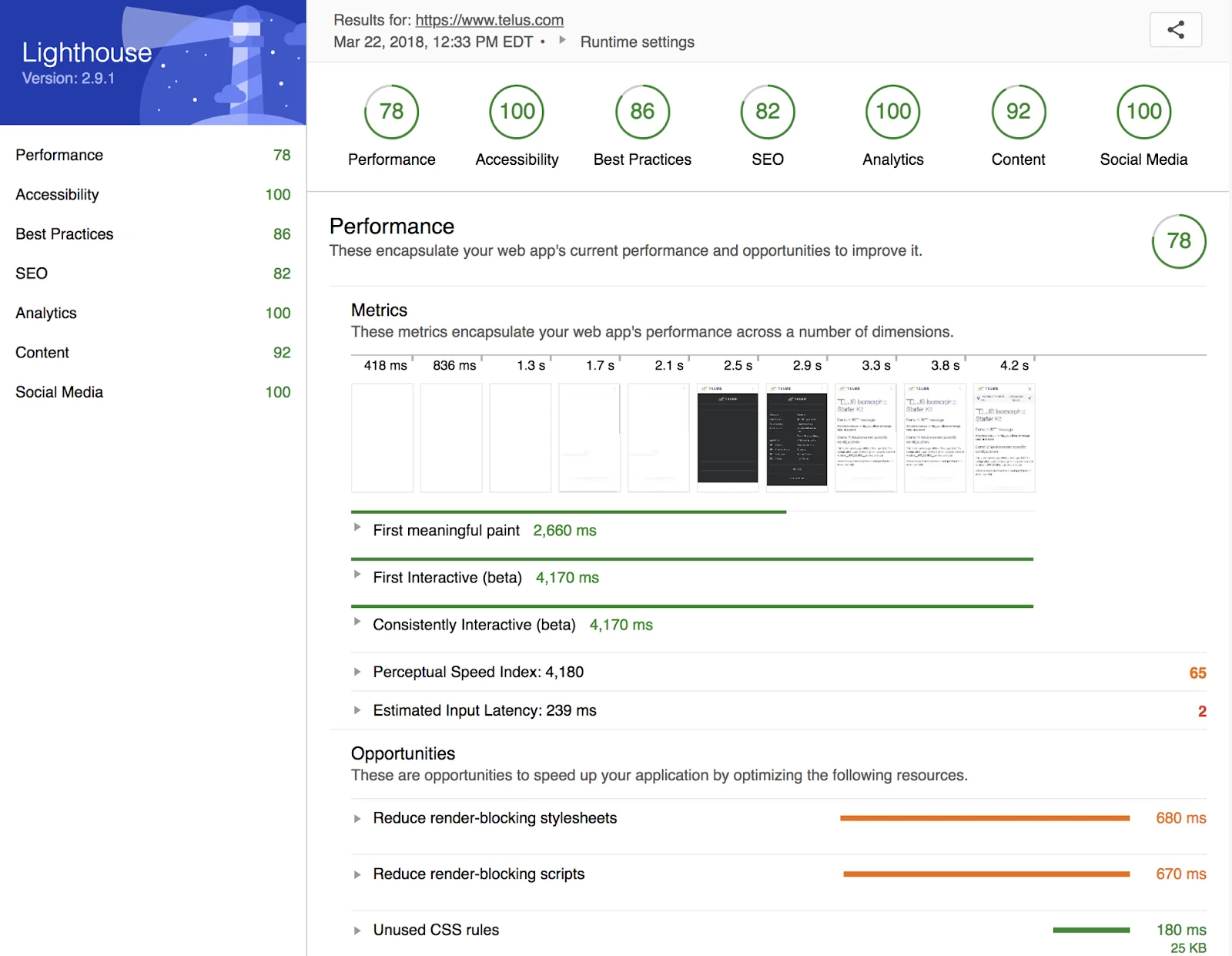

Lighthouse is an open-source project created and maintained by Google. At its core, it’s a framework for the automated testing of mobile web apps [though it isn’t limited to them]. When Lighthouse first hit our radar in the spring of 2017, we were immediately intrigued.

We were especially excited by Lighthouse’s Page Speed tests. Testing page speed reliably and meaningfully is just plain hard. Suffice it to say, we were happy to leverage Google’s great work here and to standardize on their key metrics, namely time to First Meaningful Paint and time to First Interactive. In essence: (1) when do I see useful content on the page, and (2) when can I interact with it?
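Standardizing on two timing metrics makes a simple budget check possible. The TypeScript below is a minimal sketch of that idea, not the team’s tooling: the `LoadTimings` shape, the `overBudget` function, and the budget values are all hypothetical (the article does not publish its targets), and Lighthouse’s own report format is not modelled.

```typescript
// Load timings in milliseconds for one page. The field names are illustrative
// labels for the two metrics named above, not a Lighthouse report schema.
interface LoadTimings {
  firstMeaningfulPaint: number;
  firstInteractive: number;
}

// Placeholder budgets: the article does not say what targets the team uses.
const defaultBudgets: LoadTimings = {
  firstMeaningfulPaint: 2000,
  firstInteractive: 5000,
};

// Return one message per metric that exceeds its budget (an empty array means within budget).
function overBudget(measured: LoadTimings, budgets: LoadTimings = defaultBudgets): string[] {
  const metrics: (keyof LoadTimings)[] = ["firstMeaningfulPaint", "firstInteractive"];
  return metrics
    .filter((m) => measured[m] > budgets[m])
    .map((m) => `${m}: ${measured[m]} ms exceeds budget of ${budgets[m]} ms`);
}

// Usage: a page that paints quickly but takes too long to become interactive.
const slowPage = overBudget({ firstMeaningfulPaint: 1500, firstInteractive: 6200 });
console.log(slowPage);
```

Keeping the two metrics separate, rather than folding them into one number, preserves the distinction the article draws between seeing useful content and being able to use it.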

We were equally excited by the extensibility of the Lighthouse framework: it’s easy to add custom web page audits. In fact, we’ve extended Lighthouse to test all our basic performance criteria. We’ve built custom audits for SEO, Content, Analytics, and Social Media. Google has since added SEO audits of its own, many of which we were pleased to inherit. Many thanks to Paul Irish, Addy Osmani, and all the Lighthouse contributors; we’ll be sending some goodness back to you in the near term.
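To illustrate what a “custom web page audit” boils down to, here is a self-contained TypeScript sketch: an audit is a named check over data gathered from a page. Everything in it (`PageArtifacts`, `CustomAudit`, `runAudits`, and the two example audits) is hypothetical. Real Lighthouse audits are classes built on Lighthouse’s own `Audit` base class and registered through a config; this sketch deliberately avoids that API rather than guess at its details.

```typescript
// Hypothetical artifacts a gatherer might collect for one page.
interface PageArtifacts {
  url: string;
  html: string;
}

interface AuditResult {
  id: string;
  passed: boolean;
  details: string;
}

// A custom audit, modelled here as an id plus a pure function over artifacts.
interface CustomAudit {
  id: string;
  run(artifacts: PageArtifacts): AuditResult;
}

// SEO example: the page should declare a non-empty meta description.
// The regex only handles name-before-content attribute order; a real audit would inspect the DOM.
const metaDescriptionAudit: CustomAudit = {
  id: "meta-description",
  run({ html }) {
    const match = html.match(/<meta\s+name=["']description["']\s+content=["']([^"']*)["']/i);
    const content = match ? match[1].trim() : "";
    return {
      id: "meta-description",
      passed: content.length > 0,
      details: content ? `description: "${content}"` : "missing or empty meta description",
    };
  },
};

// Content example: the page should contain exactly one <h1> element.
const singleH1Audit: CustomAudit = {
  id: "single-h1",
  run({ html }) {
    const count = (html.match(/<h1[\s>]/gi) || []).length;
    return { id: "single-h1", passed: count === 1, details: `found ${count} <h1> element(s)` };
  },
};

// Run every audit against one page and collect the results.
function runAudits(artifacts: PageArtifacts, audits: CustomAudit[]): AuditResult[] {
  return audits.map((audit) => audit.run(artifacts));
}

const auditResults = runAudits(
  {
    url: "https://example.com/plans",
    html: '<head><meta name="description" content="Compare plans"></head><h1>Plans</h1>',
  },
  [metaDescriptionAudit, singleH1Audit],
);
console.log(auditResults);
```

Because each audit is independent and takes the same input, adding a new quality concern means adding one more entry to the list passed to `runAudits`.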

Lighthouse is embedded in our workflows

So far, we’ve covered the basic metrics we use to identify quality across our digital experiences. And we’ve introduced Lighthouse, a key tool in our QA arsenal that provides detailed insights into our progress on the web. Here’s how it all comes together.

Git Hooks:

When developers attempt to merge their work into the master codebase, we run Lighthouse against all of their web pages: Google’s audits plus our own TELUS-specific tests. If our quality standards are not met, the merge fails. It’s that simple. Quality assured.

Each category of our Lighthouse tests has a minimum threshold that must be satisfied: for example, if a page’s Performance score falls below 80, the merge fails. We don’t diminish the quality of the master codebase, ever. That’s uncivilized.
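A per-category quality gate of this kind can be sketched in a few lines of TypeScript. This is a minimal illustration, assuming each page’s results have already been reduced to category scores on a 0-100 scale. The `CategoryScores` type, the `findFailures` and `canMerge` functions, and every threshold except Performance at 80 are hypothetical; neither the team’s actual hook nor Lighthouse’s report format is shown.

```typescript
// Category scores for one page on a 0-100 scale (an assumed shape, not Lighthouse's report format).
type CategoryScores = Record<string, number>;

// Hypothetical minimums. Only "performance: 80" comes from the article; the rest are placeholders.
const minimums: CategoryScores = {
  performance: 80,
  accessibility: 90,
  seo: 90,
  content: 90,
  analytics: 90,
};

// One message per (page, category) pair that falls below its minimum.
// A category missing from a page's results counts as a score of 0.
function findFailures(resultsByUrl: Record<string, CategoryScores>, mins: CategoryScores): string[] {
  const failures: string[] = [];
  for (const url of Object.keys(resultsByUrl)) {
    for (const category of Object.keys(mins)) {
      const score = resultsByUrl[url][category] ?? 0;
      if (score < mins[category]) {
        failures.push(`${url} ${category}: ${score} < ${mins[category]}`);
      }
    }
  }
  return failures;
}

// The merge proceeds only if every page clears every threshold.
function canMerge(resultsByUrl: Record<string, CategoryScores>): boolean {
  const failures = findFailures(resultsByUrl, minimums);
  failures.forEach((f) => console.error(`Quality gate failed: ${f}`));
  return failures.length === 0;
}

const pages: Record<string, CategoryScores> = {
  "/plans": { performance: 86, accessibility: 95, seo: 92, content: 100, analytics: 100 },
  "/phones": { performance: 74, accessibility: 93, seo: 91, content: 100, analytics: 100 },
};
console.log(canMerge(pages)); // false: /phones performance is below 80
```

In a real hook, a `false` result would be turned into a non-zero exit code so the merge is rejected; the article doesn’t describe how that wiring is done at TELUS.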

Delivery Pipeline:

In addition to local development, we run Lighthouse at every stage of our delivery pipeline. When code is merged, a build is triggered and, depending on the test results, the code is deployed to each environment in our delivery pipeline (testing, staging, and production).

Before code is moved from one environment to the next, however, we run unit tests, E2E tests, and you guessed it, Lighthouse. If any of these tests fail, the build fails. Again, we aim to provide an exceptional user experience, every time. Controlling builds in this way ensures nothing slips through the cracks en route to production.
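The stage-by-stage gating can be modelled as a loop over environments. In this sketch, the checks for each environment come from a caller-supplied `runChecks` function; that function, `promote`, and the result types are hypothetical, and real unit, E2E, and Lighthouse runs are replaced by stubs. A build that fails any check in one environment is never promoted to the next.

```typescript
// Environments in promotion order, as listed in the article.
const environments = ["testing", "staging", "production"] as const;
type Environment = (typeof environments)[number];

// Result of one check (unit tests, E2E tests, Lighthouse) run against an environment.
interface CheckResult {
  check: string;
  passed: boolean;
}

interface PipelineOutcome {
  deployedTo: Environment;
  failedChecks: string[];
}

// The build lands in the first environment. Before it moves on to the next one,
// every check must pass against the current environment; the first failure stops the build.
function promote(runChecks: (env: Environment) => CheckResult[]): PipelineOutcome {
  let current: Environment = environments[0];
  for (let i = 1; i < environments.length; i++) {
    const failed = runChecks(current)
      .filter((r) => !r.passed)
      .map((r) => r.check);
    if (failed.length > 0) {
      return { deployedTo: current, failedChecks: failed };
    }
    current = environments[i];
  }
  return { deployedTo: current, failedChecks: [] };
}

// Usage with a stubbed check runner in which Lighthouse fails on staging.
const outcome = promote((env) => [
  { check: "unit", passed: true },
  { check: "e2e", passed: true },
  { check: "lighthouse", passed: env !== "staging" },
]);
// The build stops at staging because the Lighthouse check fails there.
console.log(outcome.deployedTo, outcome.failedChecks);
```

Passing the checks in as a function keeps the promotion logic independent of how each test suite is actually executed.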

Production Crawler:

To keep tabs on the quality of pages our customers are browsing, we run Lighthouse against [almost] every URL in production and surface the results in our corporate analytics dashboards. This gives everyone in our organization visibility into the baseline quality of our web experiences.
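How the crawler’s output reaches the dashboards isn’t described, but one plausible step is rolling per-URL scores up into per-category figures. The sketch below assumes a hypothetical `CrawlResult` shape and `summarize` function, and computes, for each category, the average score across crawled pages and the number of pages below a minimum.

```typescript
// One crawled production URL and its category scores (0-100); the shape is assumed.
interface CrawlResult {
  url: string;
  scores: Record<string, number>;
}

interface CategorySummary {
  average: number;
  pagesBelowMinimum: number;
}

// Roll per-URL results up into one row per category, the kind of figure a dashboard might plot.
// A category missing from a page counts as 0.
function summarize(
  results: CrawlResult[],
  minimums: Record<string, number>,
): Record<string, CategorySummary> {
  const summary: Record<string, CategorySummary> = {};
  for (const category of Object.keys(minimums)) {
    let total = 0;
    let below = 0;
    for (const result of results) {
      const score = result.scores[category] ?? 0;
      total += score;
      if (score < minimums[category]) below++;
    }
    summary[category] = {
      average: results.length > 0 ? total / results.length : 0,
      pagesBelowMinimum: below,
    };
  }
  return summary;
}

const crawl: CrawlResult[] = [
  { url: "https://www.example.com/a", scores: { performance: 70, seo: 95 } },
  { url: "https://www.example.com/b", scores: { performance: 90, seo: 85 } },
];
console.log(summarize(crawl, { performance: 80, seo: 90 }));
```

Reporting both an average and a count of failing pages matters because a healthy average can hide a handful of pages that are well below the bar.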

Armed with these insights, Product Owners (POs) and other stakeholders can objectively prioritize their backlogs and continue to improve on the basics, sprint over sprint. And as we improve, we raise our quality thresholds.
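The article doesn’t say how thresholds are raised. As one possible policy, offered purely as a hypothetical, a ratchet could lift each threshold toward the latest observed average (minus a safety margin) while never lowering it. The `ratchetThresholds` function and its default margin of 5 points are illustrative assumptions.

```typescript
// Hypothetical ratchet policy: lift each threshold toward the observed average minus a safety
// margin, never lower an existing threshold, and never exceed the top of the 0-100 scale.
function ratchetThresholds(
  current: Record<string, number>,
  observedAverages: Record<string, number>,
  margin = 5,
): Record<string, number> {
  const next: Record<string, number> = {};
  for (const category of Object.keys(current)) {
    const observed = observedAverages[category];
    // Without data for a category, keep its threshold unchanged.
    const candidate = observed === undefined ? current[category] : Math.floor(observed) - margin;
    next[category] = Math.min(100, Math.max(current[category], candidate));
  }
  return next;
}

const raised = ratchetThresholds({ performance: 80, seo: 90 }, { performance: 92.4, seo: 88 });
console.log(raised); // performance rises to 87; seo stays at 90
```

The margin keeps a single good sprint from setting a bar that ordinary day-to-day variation would immediately break.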

How Important are the Basics at TELUS Digital?

Customer satisfaction is a big deal at TELUS. We take great pride in delivering best-in-class experiences for all our users. It is our reason for being.

In fact, “Quality over Speed” is a core value at TELUS. It’s even built into our performance scorecards! Every team at TELUS Digital is evaluated, in part, by its performance across our basic quality metrics: page speed, content, SEO, accessibility, and analytics. And Lighthouse is how we report on it.

Out of the box, Lighthouse provides detailed reporting on a host of key metrics, most notably page speed. Additionally, the ease with which we can extend it to include our own quality concerns, and integrate it into our existing workflows, has made it an invaluable tool within our organization. It serves as a constant reminder of our goals and provides detailed insights into our progress and areas for improvement.

As an organization, we aspire to greatness. As a UI developer, I have a big role, and a big responsibility, in delivering on that promise. Our users expect it. We demand it of ourselves. And Lighthouse has proven to be an essential tool in helping us achieve those goals.
